A little while ago we released our Paid Content product after two consecutive six-week sprints. The first six weeks were spent creating the MVP, and the second six weeks were spent polishing it up.

This is the breathless tale of those first six weeks.

Part 1: Research

This whole thing began because we saw that our clients were struggling. Paid content, sponsored content, native content – whatever you call it – remains a mysterious beast for many folks, and there were few options for measuring paid content performance, let alone figuring out how to make it better.

For the first two weeks of our sprint we researched and brainstormed. We huddled into offices and littered the whiteboards with ideas, questions, diagrams, and whatever else could help us make sense of things. We’d pore over data to see what insights we could glean and what information would be helpful to know for any native content campaign. It was a lot of debating and arguing, breaking for lunch, and then regrouping for more debating and arguing.

Amidst these debates we talked to existing clients. A lot. We wanted to know how they created paid content campaigns, and what pain points they had experienced. We’d invite them into the office and talk to them on the phone. We’d visit them in their offices and pummel them with questions, searching for ways to improve how they analyzed paid content performance.

Part 2: Design

As we were assessing the type of data our clients needed, we also began to design our version of better.

We’d create one mock-up, show it around, gather feedback, and iterate. We’d see what worked in a design and what didn’t. We’d toss out the bad, toss out some of the good, and try again. We moved swiftly, for time was against us.

Clickable mocks proved to be a great success. Typically a mock is static. With a static mock you can cycle through a list of images to give a sense of what the product will contain, but a clickable mock allows you to simulate how the product will feel when it’s complete.

These clickable mocks proved insanely helpful when discussing the product with clients. They enabled us to show our ideas and direction rather than just tell.

Part 3: Development

With under three weeks left to go in the cycle we knew we had to hustle.

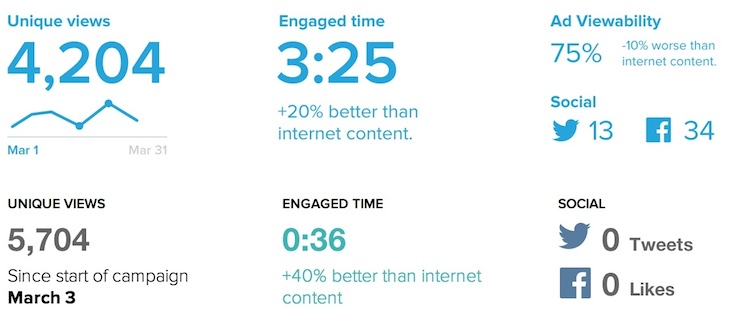

We wanted to see what the data for a real paid content campaign looked like, so we worked towards getting things working on the screen as fast as possible. Despite all our planning and designing we had yet to see real data for a paid content campaign, and we were concerned that we had planned and designed for data points that might not exist. It’s fine to plan to include data about the amount of Twitter activity that drives traffic to a piece of native content, but if that value is always 0, it’s not helpful.

To our relief our planning paid off. The data worked and made sense. From there on out it was a sprint to bring all the beautiful designs to life.

Part 4: Launch

With the launch of our MVP looming we knew we’d have to start making some hard decisions. Everything we wanted to include for the first version would not fit, so out came the knife as we looked to see what we could cut away.

Delicately we began to inspect what was left. We began to weigh things, deciding which were show-stopping features or essential functionality that had to make it for the launch, and which would be fine to include afterwards. We’d see which features would be more ‘expensive’ to complete. At this stage the only currency we traded in was time, with each feature’s time to complete balanced against its impact on the product.

Conclusion

Some hard decisions were made, but ultimately we managed to ship on time and practically feature-complete.

We were able to bring to market a product that six weeks prior did not exist as anything but an idea. Everyone at Chartbeat came together to make this a reality, each pulling their own weight and helping one another. Through and through it was an incredible team effort.

Within Chartbeat we managed to create an MVP in record time. We were able to assess client needs and industry gaps to form our product and get it out the door and into clients’ hands. We’re not done, but we’re off to a strong start.